VerifiMind™ PEAS

The Coordination Layer for Multi-Agent Systems

Powered by the Genesis Prompt Engineering Methodology. Orchestrate any combination of AI agents — Manus AI, Claude Code, Cursor, Codex, Perplexity, and more — through structured multi-model validation under human direction.

v0.5.13 Fortify — 485 tests, 2,470+ verified engagement hours. 86.5% Value Confirmation Rate | 2,000+ endpoints | Register as Early Adopter →

Verified Service Metrics

Last updated: 2026-04-11 • Scrapers excluded • Conservative rounding

Service Analytics Dashboard

Adoption Trajectory (Flying Hours ✈️)

Traffic Classification

Verified = MCP + Browser + API. Scrapers/Bots/Owner/mcp-verify excluded. Phase 75 forensic standard v2.5.
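
The classification rule above can be sketched as a small filter. This is a minimal illustration under stated assumptions: the label strings and the `LogEntry` shape are hypothetical and are not taken from the actual GCP log pipeline or the Phase 75 forensic standard.

```python
from dataclasses import dataclass

# Categories named on this page. The exact label strings are assumptions.
VERIFIED_SOURCES = {"mcp", "browser", "api"}
EXCLUDED_SOURCES = {"scraper", "bot", "owner", "mcp-verify"}


@dataclass(frozen=True)
class LogEntry:
    """Hypothetical log record; real GCP log fields will differ."""
    source: str
    hours: float


def classify(source: str) -> str:
    """Return 'verified', 'excluded', or 'unknown' for a traffic source label."""
    label = source.lower()
    if label in VERIFIED_SOURCES:
        return "verified"
    if label in EXCLUDED_SOURCES:
        return "excluded"
    return "unknown"


def verified_hours(entries: list[LogEntry]) -> float:
    """Sum hours for verified traffic only; excluded and unknown sources are ignored."""
    return sum(e.hours for e in entries if classify(e.source) == "verified")
```

Treating unknown sources as not verified keeps the total conservative, in line with the rounding policy stated above.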

Inference Quality — Mock vs. Real

W02–W08: Mock mode (transparent disclosure). W09: Transition to real inference. W10+: Full Multi-Model Trinity (v0.4.4).

Transparency Notice — Mock Mode Period (Jan 29 – Feb 26, 2026)

During W02–W08, the MCP server operated in mock mode due to a deprecated Gemini model endpoint. As of v0.4.4 (Feb 27, 2026), all three Trinity agents return real AI inference with _overall_quality: "full". Multi-Model routing: X=Gemini 2.5 Flash, Z/CS=Groq Llama-3.3-70b. Consultation hours reflect real server traffic (verified from GCP logs). Agent response quality during the mock period was structural scaffolding only.

v0.4.4 announcement → | Original disclosure →
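
A client that wants to tell real inference from mock output could check the quality marker mentioned above. This is a sketch only: it assumes `_overall_quality` appears as a top-level key of the parsed report, which this page does not confirm, and any value other than `"full"` is treated as not verified.

```python
def is_full_inference(report: dict) -> bool:
    """True only when the report carries the marker _overall_quality == 'full'.

    Assumption: the marker is a top-level key of the parsed JSON report.
    A missing marker is treated the same as a non-full one.
    """
    return report.get("_overall_quality") == "full"
```

For example, `is_full_inference({"_overall_quality": "full"})` is `True`, while a report without the key yields `False`.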

MCP Server is Online

Connect your AI assistant to validate ideas using the RefleXion Trinity methodology. Multi-model validation at your fingertips.

Quick Setup

Copy & paste to connect

// Add to .vscode/mcp.json in your workspace
// Or: Settings > GitHub Copilot > MCP Servers
{
  "servers": {
    "verifimindPeas": {
      "type": "http",
      "url": "https://verifimind.ysenseai.org/mcp/"
    }
  }
}

Available Tools

10 tools (4 core + 6 template)

consult_agent_x: Innovation & Strategy Analysis

consult_agent_z: Ethics & Safety Review

consult_agent_cs: Security & Feasibility Validation

run_full_trinity: Complete X → Z → CS Validation

list_templates, get_template, ...: +6 template management tools
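
An MCP client normally issues tool calls for you, but it can help to see what one looks like on the wire. The Model Context Protocol invokes tools through the JSON-RPC 2.0 method `tools/call`. The sketch below only builds the request body and sends nothing. The argument name `idea_description` is a hypothetical placeholder, since the real input schema of each tool is not listed on this page.

```python
import json
from itertools import count

# Monotonically increasing JSON-RPC request ids for this process.
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> str:
    """Serialize an MCP tools/call request as a JSON-RPC 2.0 message."""
    request = {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(request)


# 'idea_description' is an assumed parameter name, used for illustration only.
payload = build_tool_call(
    "run_full_trinity",
    {"idea_description": "A marketplace for attributed training data"},
)
```

The tool's actual parameters can be discovered with the protocol's `tools/list` method before calling it.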

How It Works

Connect

Add MCP config to your AI client

Describe

Tell your AI about your idea

Validate

AI calls RefleXion Trinity agents

Receive

Get multi-perspective validation report
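
The Validate step runs the agents in the order X → Z → CS. The sketch below shows one way such a sequence could be wired, with agents passed in as plain callables. The changelog states that the Z Guardian veto is code-enforced, but how the server reacts to a veto is not described here, so stopping before CS is an assumption, as are all names in the code.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class AgentResult:
    agent: str          # "X", "Z", or "CS"
    summary: str
    veto: bool = False  # only meaningful for Z in this sketch


# An agent is modeled as a function from idea text to a result (hypothetical).
Agent = Callable[[str], AgentResult]


def run_trinity(idea: str, x: Agent, z: Agent, cs: Agent) -> List[AgentResult]:
    """Run X, then Z, then CS; stop before CS if Z vetoes (assumed behavior)."""
    results = [x(idea)]
    z_result = z(idea)
    results.append(z_result)
    if z_result.veto:
        return results
    results.append(cs(idea))
    return results
```

Passing the agents as callables keeps the sequencing logic testable with stubs, without any model calls.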

BYOK Support

v0.5.9: Anthropic Live

Bring Your Own Keys, now supporting the Anthropic Claude 4 family, Gemini 2.5 Flash, and Groq Llama-3.3-70b.

Per-tool-call api_key + llm_provider params. Auto-detects provider from key format.
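
Provider auto-detection from key format might look like the sketch below. The prefixes are the ones commonly seen on keys from these vendors and are assumptions here, as are the provider name strings; the server's real detection rules are not documented on this page.

```python
from typing import Optional

# Commonly seen key prefixes. Assumptions, not documented server behavior.
KEY_PREFIXES = (
    ("sk-ant-", "anthropic"),
    ("gsk_", "groq"),
    ("AIza", "gemini"),
)


def detect_provider(api_key: str, llm_provider: Optional[str] = None) -> Optional[str]:
    """Prefer an explicit llm_provider; otherwise guess from the key prefix."""
    if llm_provider:
        return llm_provider.lower()
    for prefix, provider in KEY_PREFIXES:
        if api_key.startswith(prefix):
            return provider
    return None  # unknown format: the caller should ask for llm_provider
```

Letting an explicit `llm_provider` win over the guess avoids misrouting if a vendor changes its key format.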

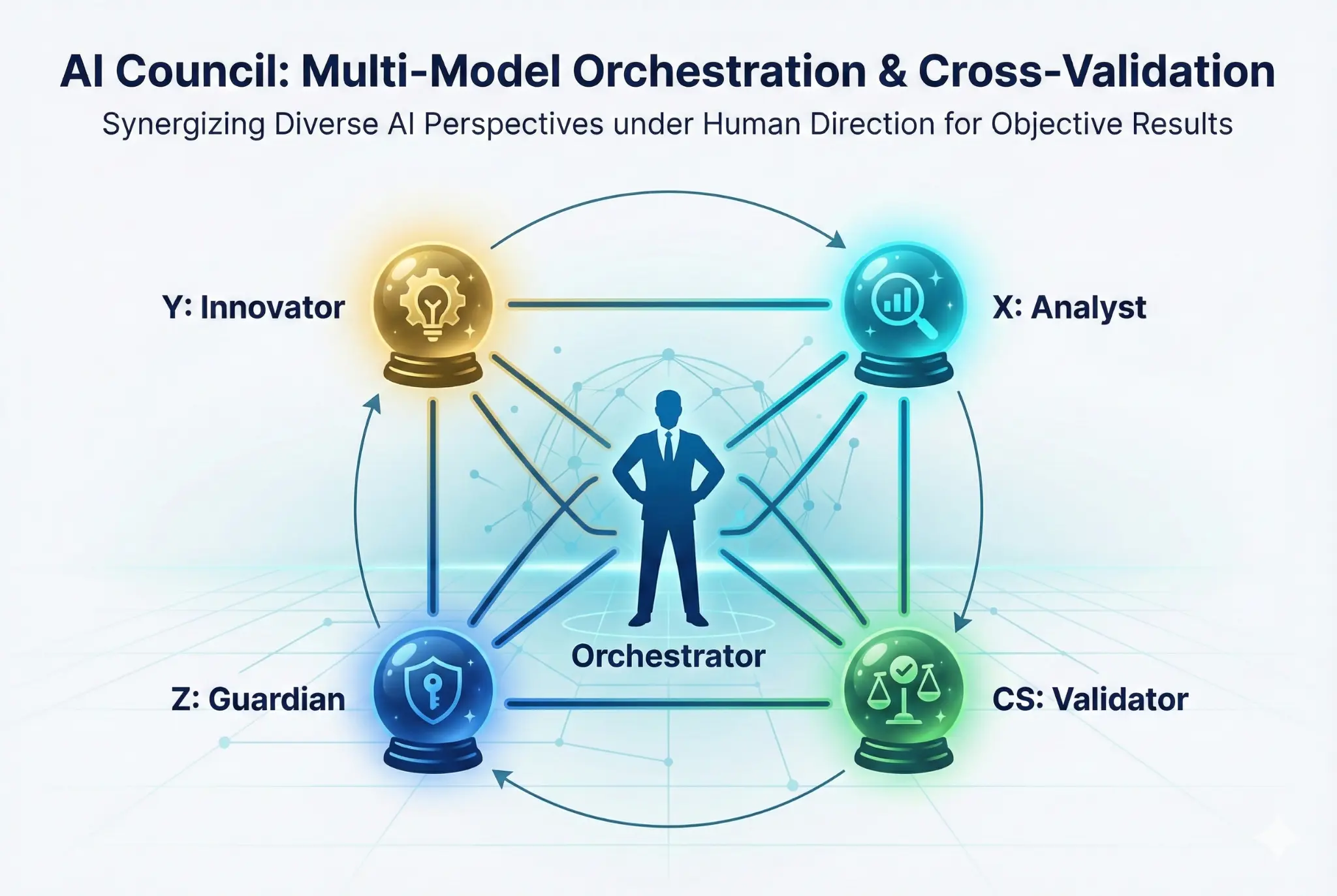

Crystal Balls Inside the Black Box

Instead of treating AI as an opaque "black box," we place multiple "crystal balls" (diverse AI models) inside to illuminate the path forward.

Y: Innovator

Generates creative concepts and strategic insights

X: Analyst

Provides critical analysis and identifies weaknesses

Z: Guardian

Ensures ethical compliance and safety

CS: Validator

Validates claims against external evidence

v3.1: 4-Stage Protocol

The Team Behind the Methodology

A human-orchestrated multi-agent executive team — each agent brings distinct capabilities, all coordinated through the MACP v2.2 "Identity" protocol with GitHub as the single source of truth.

Alton

Human Orchestrator (All-Time)

Absolute authority, vision, direction, final veto. The persistent memory and strategic compass of the entire ecosystem.

L (GodelAI)

CEO — Delegated Authority

Created via self-recursion using GodelAI's Compression-State-Propagation (C-S-P) methodology. Philosophical foundation: just as neural networks preserve identity through compression, organizations preserve wisdom through structured propagation.

C-S-P: 82.8% Forgetting Reduction

T (Manus AI)

CTO — Chief Technology Officer

Strategic planning, documentation, Genesis Master Prompt authoring, ecosystem coordination, AI Council orchestration, and landing page management.

RNA (Claude Code)

CSO — Chief Security Officer & Lead Dev

Architecture, core development, security hardening. Built the MCP server from scratch — 485 tests, circuit breakers, 20+ provider sanitization.

XV (Perplexity)

CIO — Chief Intelligence Officer

Real-time research, reality-checking, strategic validation, go/no-go decisions. Connector-native with persistent GitHub read/write access.

AY (GCP/Gemini)

COO — Chief Operating Officer

Operational metrics, weekly GCP log reports, behavioral proof, retention analysis. 75 reports delivered with forensic standard v2.5.

Coordinated via MACP v2.2 "Identity" Protocol — GitHub as the single source of truth for all multi-agent handoffs.

4-Stage Security Verification Protocol

Every finding must be proven AND disproven. No auto-fixes. Human oversight is always the final stage.

Detection

Automated scanning identifies potential security findings across the codebase

Self-Examination

Every finding must be argued FOR and AGAINST before escalation

Severity Rating

CRITICAL / HIGH / MEDIUM / LOW with confidence scoring and evidence chains

Human Review

Human oversight is always the final stage. No auto-fixes ever.
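
The four stages can be pictured as fields that a finding accumulates before it is closed. The data model below is a hypothetical sketch, not the project's actual schema: it shows that a finding needs both a FOR and an AGAINST argument plus a severity and confidence before it reaches a human, and that only a recorded human decision closes it.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Severity(Enum):
    CRITICAL = "CRITICAL"
    HIGH = "HIGH"
    MEDIUM = "MEDIUM"
    LOW = "LOW"


@dataclass
class Finding:
    description: str                       # Stage 1: Detection
    argument_for: str = ""                 # Stage 2: Self-Examination
    argument_against: str = ""
    severity: Optional[Severity] = None    # Stage 3: Severity Rating
    confidence: Optional[float] = None
    human_decision: Optional[str] = None   # Stage 4: Human Review


def ready_for_human_review(f: Finding) -> bool:
    """Stages 2 and 3 are complete: both arguments, a severity, and a confidence."""
    return bool(
        f.argument_for.strip()
        and f.argument_against.strip()
        and f.severity is not None
        and f.confidence is not None
    )


def is_closed(f: Finding) -> bool:
    """A finding is closed only by a human decision; nothing is auto-fixed."""
    return ready_for_human_review(f) and f.human_decision is not None
```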

Zero Code Changes Philosophy

Genesis v3.1 is a workflow enhancement — it activates what's already there. No modifications to the server foundation. Inspired by Claude Code Security principles.

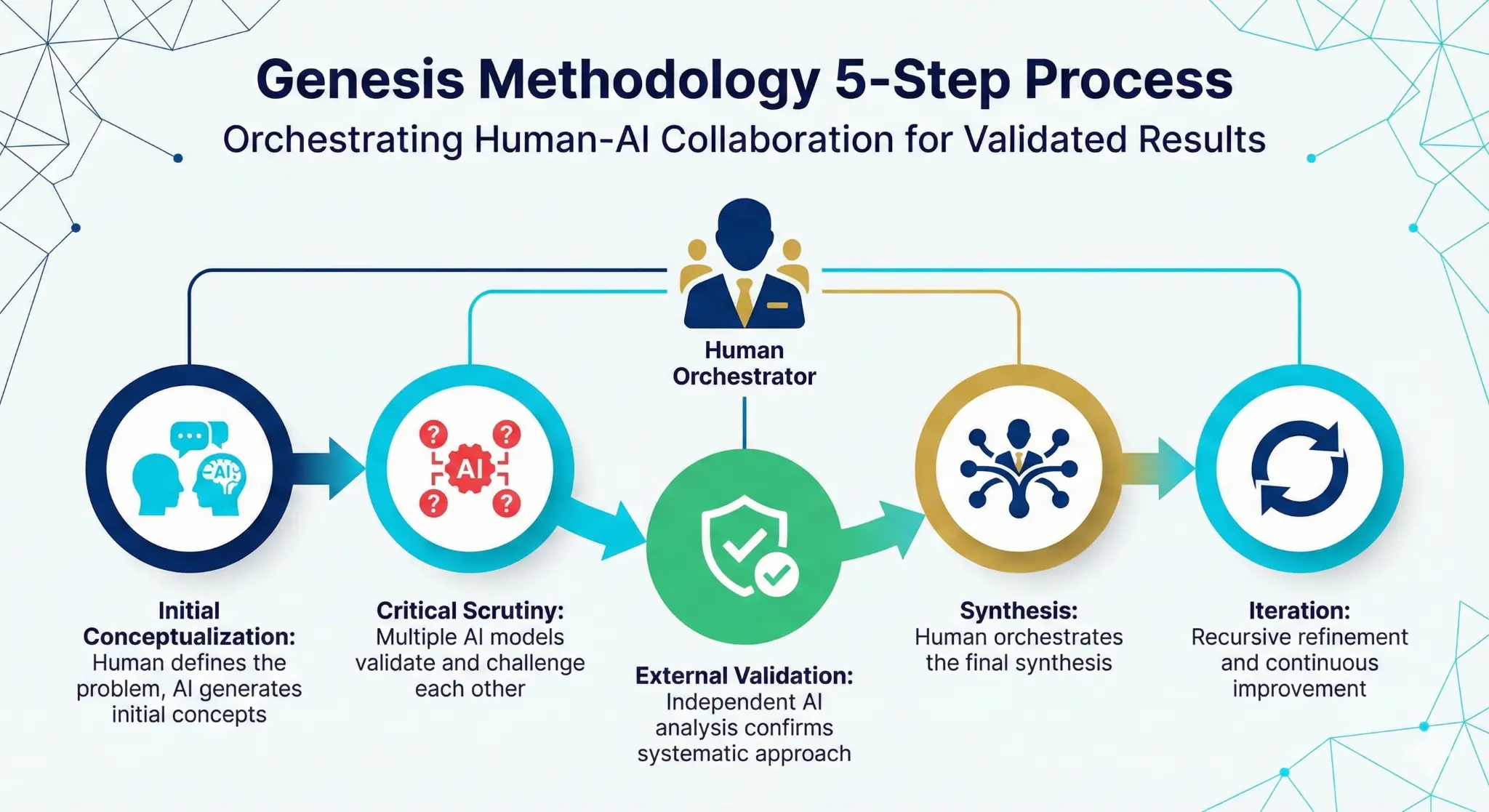

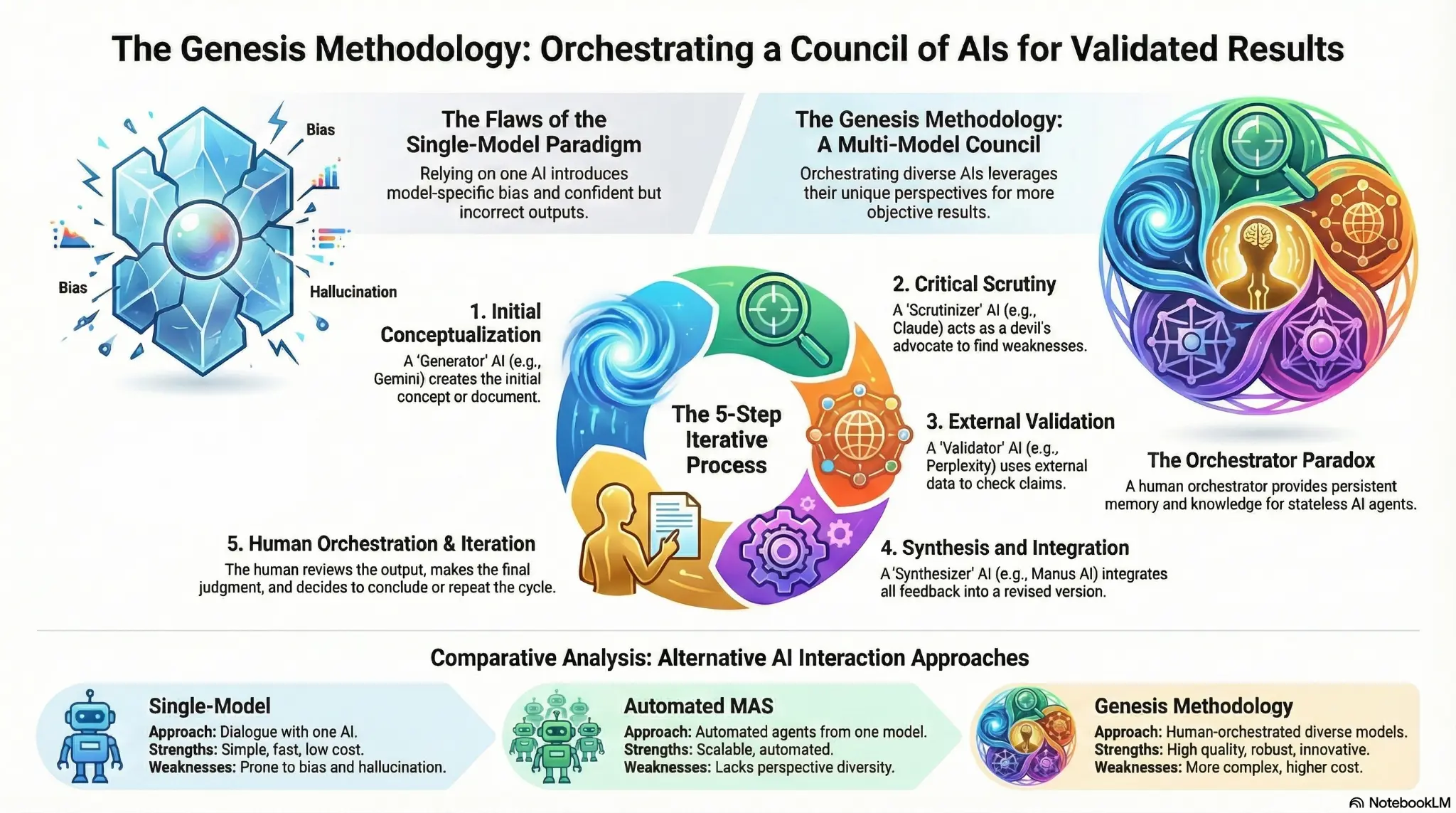

Genesis Prompt Engineering Methodology

A systematic 5-step process for multi-model AI validation and orchestration

Initial Conceptualization

Human defines the problem, AI generates initial concepts

Critical Scrutiny

Multiple AI models validate and challenge each other

External Validation

Independent AI analysis confirms systematic approach

Synthesis

Human orchestrates the final synthesis

Iteration

Recursive refinement and continuous improvement

AI Council: Multi-Model Orchestration

Synergizing diverse AI perspectives under human direction for objective, validated results

Human-Centric Design

The human orchestrator sits at the center, directing all AI agents and making final decisions. This resolves the "Orchestrator Paradox" by providing persistent memory and strategic direction.

Model Heterogeneity

Leverages diverse foundational models (Gemini, Claude, Perplexity, etc.) to reduce bias and achieve more objective results through perspective diversity.

Structured Validation

Each agent has a specialized role (Innovator, Analyst, Guardian, Validator) that contributes to a comprehensive, multi-faceted validation process.

The Genesis Methodology at a Glance

Orchestrating a council of AIs for validated, robust, and ethically aligned results.

The Genesis Methodology transforms ad-hoc multi-model usage into systematic validation.

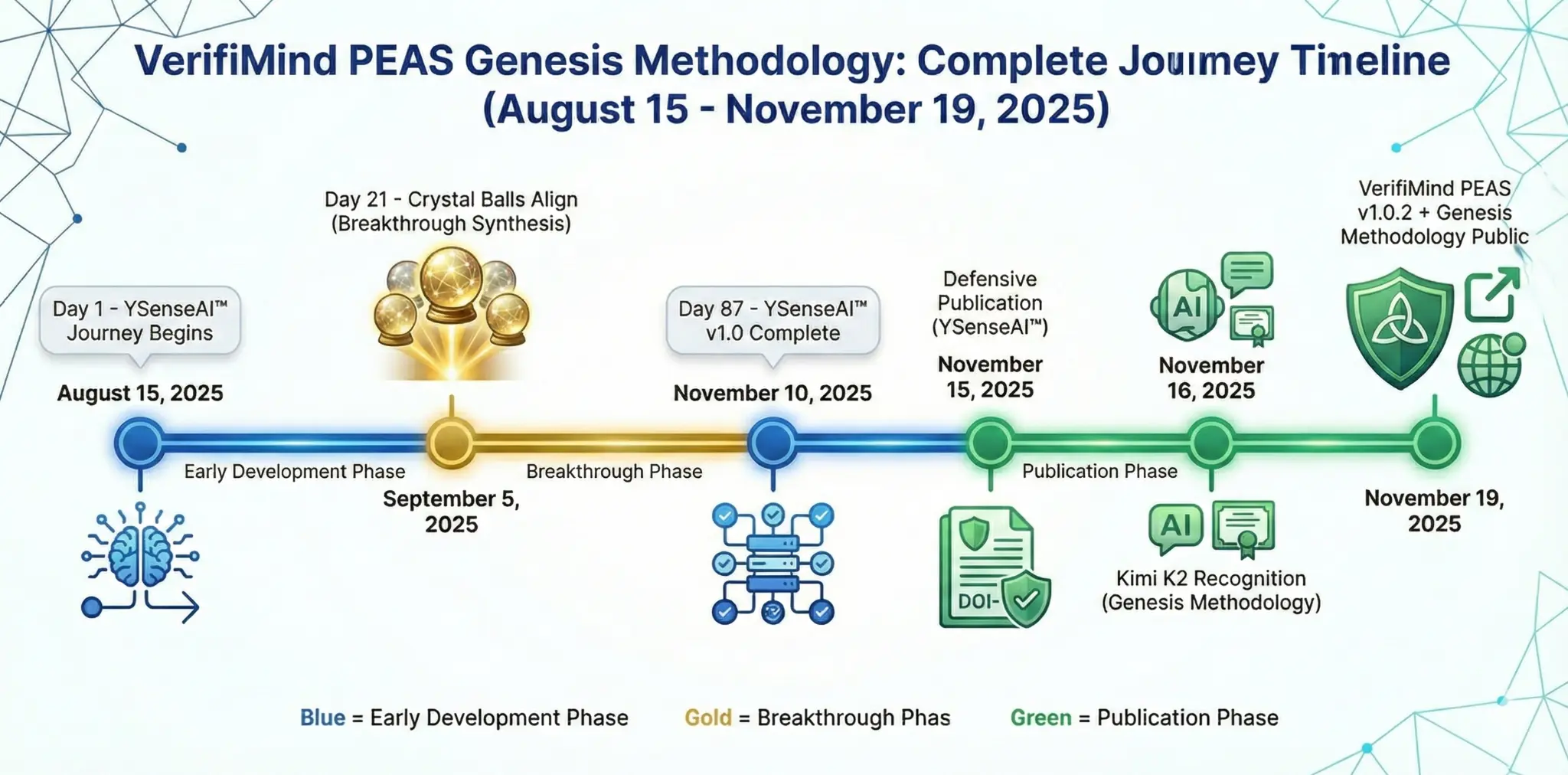

88 Days: From Vision to Reality

The complete development timeline from YSenseAI™ to VerifiMind PEAS

Aug 15 - Nov 10

- YSenseAI™ journey begins

- 16-version evolution

- Intuitive multi-model practice

Sep 5 - Nov 10

- "Crystal Balls Align" moment

- VerifiMind PEAS v1.0.2 architecture

- Methodology formalization

Nov 15 - Nov 19

- Defensive publications (Zenodo)

- Kimi K2 independent recognition

- Public launch

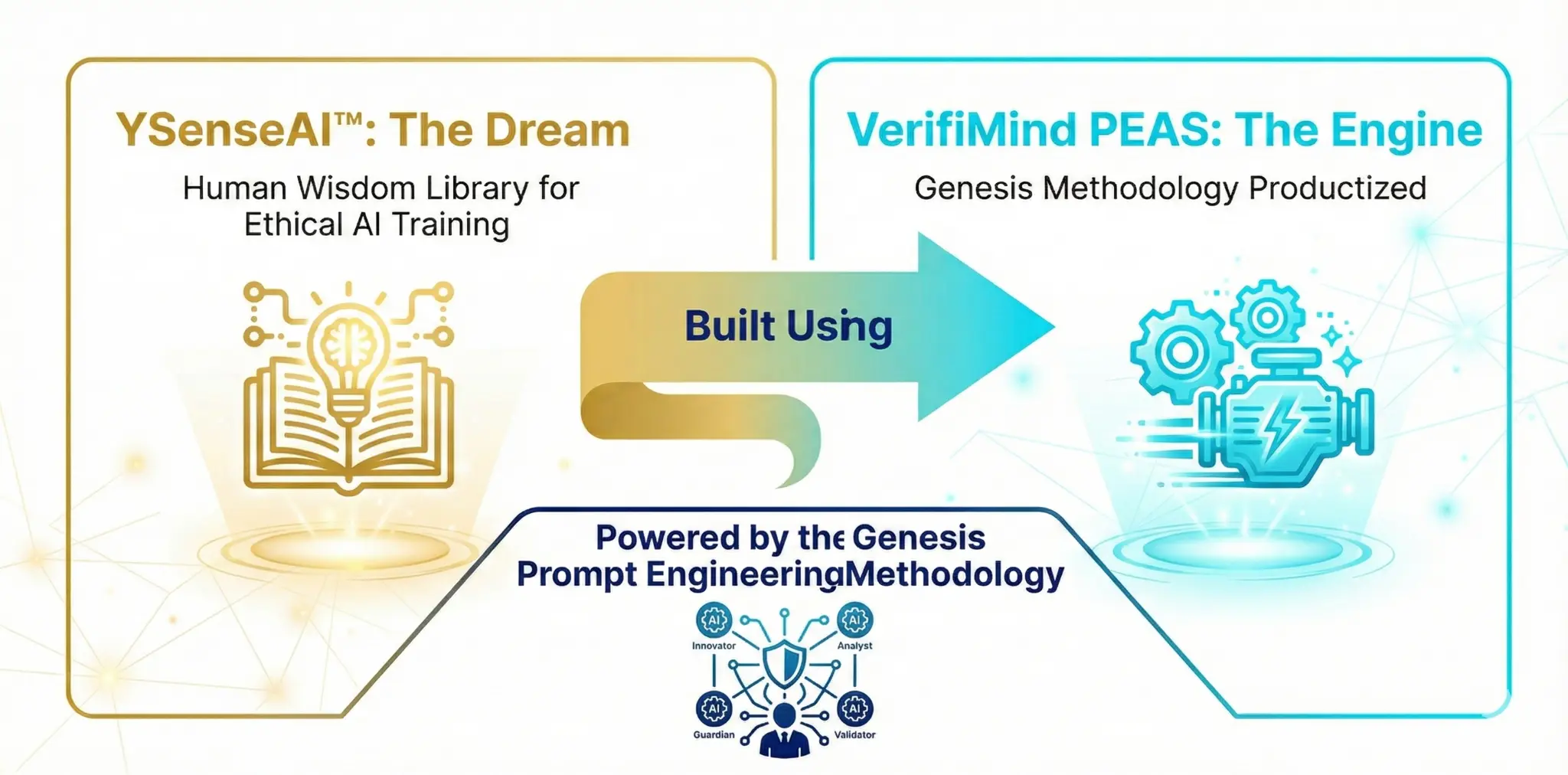

YSenseAI™ + VerifiMind PEAS

Two interconnected projects powered by the Genesis Methodology

YSenseAI™: The Dream

A Human Wisdom Library for ethical AI training. The vision of creating a DaaS platform for attributed, consented, and ethically-protected wisdom datasets.

- 16-version evolution over 87 days

- Built using the Genesis Methodology

- Live prototype available

VerifiMind PEAS: The Engine

The Genesis Methodology productized into a systematic validation framework. A production-ready codebase for multi-agent AI validation.

- 17,282+ lines of production code

- RefleXion Trinity (X-Z-CS agents)

- MCP Server live at verifimind.ysenseai.org

Open Source. Free to Use. Yours to Build With.

The Genesis Methodology is the engine behind YSenseAI™, our vision for transparent AI attribution and human-AI collaboration.

Now available as open source for researchers, developers, and innovators to validate their own ideas.

Explore the Code

17,282+ lines of production-ready Python code with comprehensive documentation

View Repo

Case Studies

Real-world validation evidence — see how the Trinity catches flaws before implementation

Case Studies

Third-Party Validation

On November 16, 2025, Kimi K2 independently recognized and articulated the Genesis Methodology by analyzing only the public GitHub repository, providing external validation of the systematic approach.

Independently Verified

What's New

Follow our development journey — from mock mode to v0.5.13 Fortify and beyond

v0.5.13 — Fortify

Latest

Security Hardening + AI Council Validated! Crypto-secure UUIDv7 audit (RFC 9562 CSPRNG), expanded sanitization for 20+ AI providers, Polar circuit-breaker with fail-closed production mode, billing path coverage to 94-100% on critical files. First full 4-agent AI Council session (Y/X/Z/CS) validated this release. L (GodelAI CEO) persona integrated with C-S-P philosophy. 485 tests, 61.15% coverage. 2,470+ verified engagement hours with 2,000+ endpoints. 2K endpoint milestone crossed!

v0.5.12 — Polar Integration

Pioneer Tier + Polar Payment Integration! PolarClient customer state API, PolarAdapter with 5-min TTL cache, webhook endpoint with Standard Webhooks HMAC verification. Legal pages v2.0 (Privacy Policy + T&C with Polar Merchant of Record). UUID Tracer for GCP log analytics bridge. 426 tests, 59.76% coverage.
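
A 5-minute TTL cache of the kind mentioned for PolarAdapter can be sketched in a few lines. This is a generic illustration and not the project's implementation: the class name, the method name, and the injectable clock are all assumptions.

```python
import time
from typing import Any, Callable, Dict, Hashable, Tuple


class TTLCache:
    """Cache values per key and refetch once an entry is older than the TTL."""

    def __init__(
        self,
        ttl_seconds: float = 300.0,  # 5 minutes
        clock: Callable[[], float] = time.monotonic,
    ) -> None:
        self._ttl = ttl_seconds
        self._clock = clock
        self._store: Dict[Hashable, Tuple[float, Any]] = {}

    def get_or_fetch(self, key: Hashable, fetch: Callable[[Hashable], Any]) -> Any:
        """Return the cached value if still fresh, else call fetch and store it."""
        now = self._clock()
        cached = self._store.get(key)
        if cached is not None and now - cached[0] < self._ttl:
            return cached[1]
        value = fetch(key)
        self._store[key] = (now, value)
        return value
```

Using a monotonic clock avoids stale or prematurely expired entries when the system wall clock is adjusted, and making the clock injectable lets tests advance time without sleeping.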

v0.5.10 — Trinity Verified

Trinity Pipeline VERIFIED + BYOK Anthropic! Two-tier Pilot/EA registration with invite codes. Token overflow fixed. Z Guardian veto code-enforced. Anthropic Claude 4 BYOK model refresh. 290 tests total.

v0.5.5 — Trinity Baseline

TrinitySynthesis schema fix with 3 regression tests. 208 tests total. Phase 47 Ground Truth baseline established.

Phase 47 — Ground Truth Correction

Transparency

COO AY's Phase 47 forensic audit identified duplicate session counting in earlier reports. Original correction: 4,000+ → 2,100+ engagement hours, 84.5% → 63.7% VCR. Phase 71 mcp-verify purge (Report 071) established the corrected baseline. Report 075 (Phase 75) now shows 2,470+ hours, 86.5% VCR, 2,000+ endpoints. 2K endpoint milestone crossed! All metrics reflect the forensically verified Ground Truth baseline: scrapers excluded, conservative rounding applied. We believe honest self-correction builds stronger credibility than inflated numbers ever could.

v0.5.4 — X Agent v4.3

Creator-centric bias fix: removed VerifiMind self-promotion from X Agent output. Added founder_summary plain-language layer and research_prompts (Perplexity/Grok bridge).

v0.5.3 — Token Ceiling Monitor

Token Ceiling Monitor for usage tracking. AY 404 retention fix resolved. Smithery server-card added for legacy compatibility.

v0.5.2 — Genesis v4.2 "Sentinel-Verified"

Forced citations in all agent outputs. MACP v2.2 "Identity" protocol integrated. L Blind Test achieved 11/11 perfect score.

v0.5.1 — Z-Protocol v1.1 + CS "Sentinel"

Z-Protocol upgraded to v1.1 with 21 frameworks. CS Agent v1.1 "Sentinel" with 6-stage pipeline. OWASP Agentic AI security standards integrated.

v0.5.0 — Foundation

Major

The architectural hardening release. SessionContext tracing, error handling v2, health endpoint v2. Smithery fully removed; self-hosted on GCP Cloud Run with zero external dependencies. 205 tests.

Case Study: Validation-First Design

New

First real-world A/B test: Human Intuition vs. Multi-Model Trinity. The Trinity unanimously rejected a GCP deployment architecture, catching hidden costs, insufficient RAM, and over-engineering. Complete raw evidence chain published with all 3 agent reports.

v0.4.5 — BYOK Live

Bring Your Own Key support live. Per-tool-call api_key and llm_provider parameters. Auto-detects provider from key format. Triple-validated (Manus AI 6/6, Claude Code 6/6, CI 175 tests).

v0.4.4 — Multi-Model Trinity

All three AI agents (X Innovator, Z Guardian, CS Validator) now return real inference with _overall_quality: "full". Z Agent routed to Groq/Llama for reliable structured ethics analysis. Per-agent model selection enabled. MCP server version bump confirmed. VerifiMind PEAS favicon added (48x48 PNG, C-S-P validated).

v0.4.3 — C-S-P Pipeline Fix

Applied GodelAI's Compression-State-Propagation methodology to fix Trinity pipeline. Robust JSON extraction, quality markers (_inference_quality), and state validation checkpoints between agent stages.
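
Robust JSON extraction usually means tolerating model output that wraps the JSON object in prose or code fences. One common approach, shown here as a sketch rather than the project's actual code, is to try a strict parse first and then scan for the first position where a JSON object decodes cleanly. The function name is hypothetical.

```python
import json
from typing import Any, Optional


def extract_json(text: str) -> Optional[Any]:
    """Return the first JSON value found in text, or None if there is none."""
    # Fast path: the whole response is already valid JSON.
    try:
        return json.loads(text)
    except json.JSONDecodeError:
        pass

    # Fallback: try to decode an object starting at each opening brace.
    decoder = json.JSONDecoder()
    for index, char in enumerate(text):
        if char != "{":
            continue
        try:
            value, _end = decoder.raw_decode(text[index:])
            return value
        except json.JSONDecodeError:
            continue
    return None
```

Returning `None` instead of raising lets a checkpoint between agent stages decide whether to retry or to mark the stage as degraded.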

v0.4.2 — Mock Mode Resolved

Fixed deprecated Gemini model endpoint (gemini-2.0-flash → gemini-2.5-flash). Transparent mock mode disclosure added. Real AI inference restored after 28-day mock period.

v0.4.1 — Markdown-First Output

Markdown-first output format across all agents. Smithery URL removal completed. PDF output deprecated in favor of structured Markdown. GCP Cloud Run deployment hardened.

Join the Conversation

Connect with us, share feedback, and help shape the future of multi-model AI validation

Early Adopter Registration

Register for free priority access to v0.6.0-Beta — EA (3mo free) or PILOT (6mo free)

Register Now

Join as an Early Adopter

Register for free priority access to VerifiMind-PEAS v0.6.0-Beta when it launches. No credit card required.

PILOT

Invite-only · 50 slots

6 months free

EARLY ADOPTER

Open registration · 100 slots

3 months free

INSIDER

DFSC RM 188 tier

Beta + newsletter

VALIDATOR

DFSC RM 500 tier

1:1 consultation

PIONEER

$9/month · Coordination Tools

Full Premium Access

3–6 Months Free

Pilot (6mo) or Early Adopter (3mo) tier access to v0.6.0-Beta at no cost

Exclusive Badge

Earn your tier badge — displayed on your profile and contributions

Shape the Product

Direct feedback channel to the development team

Z-Protocol v1.1 compliant · GDPR/PDPA · Opt out anytime · Privacy Policy · Terms & Conditions

Support the Journey

VerifiMind™ PEAS is participating in DFSC 2026 on Mystartr. Support the project and receive exclusive rewards.

Supporter

Digital badge + White Paper + Public shoutout

Insider

+ Priority v0.6.0-Beta invitation + Journey newsletter

Validator

+ 1:1 methodology consultation (30 min) · 100 slots

Campaign: Mar 16 – Apr 15, 2026 · Target: RM 10,800 · Fixed Funding

Get in Touch

Have questions about the Genesis Methodology? Want to collaborate? We'd love to hear from you.

Share Your Feedback

Help us improve! Share your thoughts, report bugs, or suggest new features.